if your mid-level exposure time is 1/40s, then you should have at least exposure timess of 1/10s and 1/160s the greater the range the better). For best results, your exposure times should be at least 4 times longer and 4 times shorter (☒ stops) than your mid-level exposure (e.g. Make sure the camera does not move too much (slight variations are OK, but the viewpoint should generally be fixed). Photograph the spherical mirror using at least three different exposure times.Make sure the sphere is not too far away from the camera - it should occupy at least a 256x256 block of pixels. Place your spherical mirror on a flat surface, and make sure it doesn't roll by placing a cloth/bottle cap/etc under it.I used the back of a chair to steady my phone when taking my images. A tripod is best, but you can get away with less. Find a fixed, rigid spot to place your camera.The scene should have a flat surface to place your spherical mirror on (see my example below). Tripod / rigid surface to hold camera / very stead hand (not recommended).Automatic exposure bracketing is helpful but not really needed.

ProCamera on iOS, Camera FV-5 Lite on Android (this even has auto exposure bracketing, AEB).

This is available on all DSLRs and most point-and-shoots, and even possible with most mobile devices using the right app e.g.

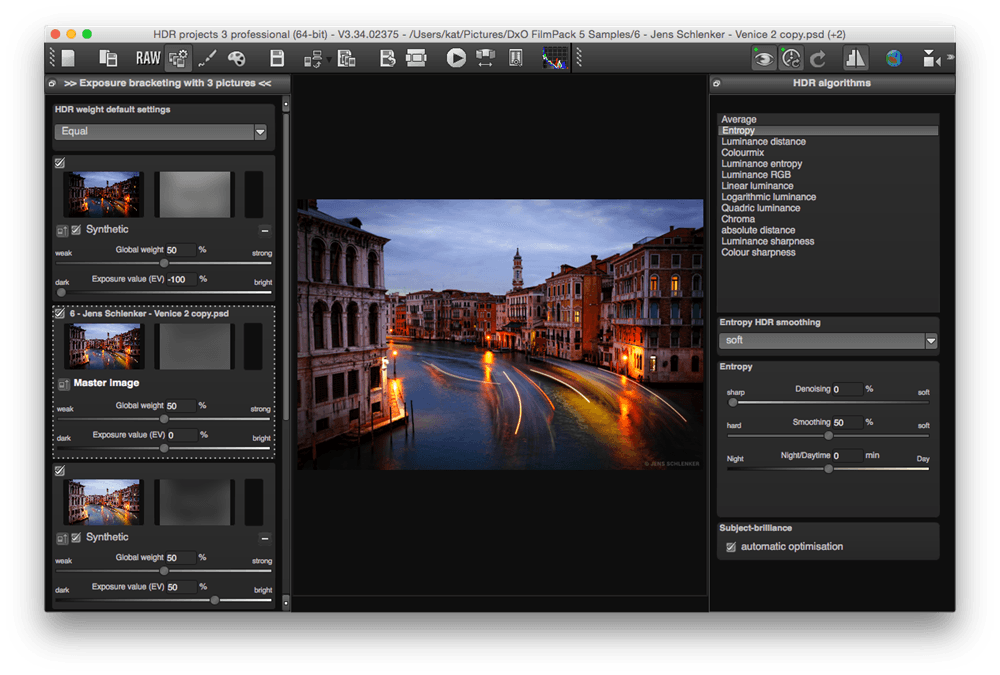

In this part of the project, you'll be creating your own HDR images. The rightmost image shows the HDR result (tonemapped for display). To the right are three pictures taken with different exposure times (1/24s, 1/60s, 1/120s) of a spherical mirror in my office. This is a very quick method for inserting computer graphics models seamlessly into photographs and videos much faster and more accurate than manually "photoshopping" objects into the photo. With this panoramic HDR image, we can then relight 3D models and composite them seamlessly into photographs. We will take the spherical mirror approach, inspired primarily by Debevec's paper. An easier alternative is to capture an HDR photograph of a spherical mirror, which provides the same omni-directional lighting information (up to some physical limitations dependent on sphere size and camera resolution). Capturing such an image is difficult with standard cameras, because it requires both panoramic image stitching (which you will see in project 5) and LDR to HDR conversion. One way to relight an object is to capture an 360 degree panoramic (omnidirectional) HDR photograph of a scene, which provides lighting information from all angles incident to the camera (hence the term image-based lighting). We will focus on their use in image-based lighting, specifically relighting virtual objects. HDR images are widely used by graphics and visual effects artists for a variety of applications, such as contrast enhancement, hyper-realistic art, post-process intensity adjustments, and image-based lighting. Most methods for creating HDR images involve the process of merging multiple LDR images at varying exposures, which is what you will do in this project. they store pixel values outside of the standard LDR range of 0-255 and contain higher precision). HDR photography is the method of capturing photographs containing a greater dynamic range than what normal photographs contain (i.e. 
HDR tonemapping can also be investigated as bells and whistles. By the end of this project, you will be able to create HDR images from sequences of low dynamic range (LDR) images and also learn how to composite 3D models seamlessly into photographs using image-based lighting techniques. The goal of this project is to familiarize yourself high dynamic range (HDR) imaging, image based lighting (IBL), and their applications.

Programming Project #4: Image-Based LightingĬS445: Computational Photography Note: This is an individual project you can NOT work collaboratively to generate the solutions.
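The exposure guideline in the data collection steps (at least 4 times longer and 4 times shorter than the mid-level exposure, i.e. ±2 stops) can be checked with a little arithmetic. The following is a minimal sketch, not part of the project starter code; the function names `bracket_exposures`, `stops_between`, and `covers_recommended_range` are hypothetical helpers introduced here for illustration, and the code assumes the middle value of the sorted exposure list is the mid-level exposure.

```python
from fractions import Fraction
import math


def bracket_exposures(mid_exposure, stops=2):
    """Return (shorter, mid, longer) exposure times in seconds.

    Each stop doubles or halves the exposure time, so +/- `stops` stops
    means dividing or multiplying the mid exposure by 2**stops.
    """
    factor = 2 ** stops
    mid = Fraction(mid_exposure)
    return (mid / factor, mid, mid * factor)


def stops_between(t_long, t_short):
    """Number of stops separating two exposure times (log base 2 of the ratio)."""
    return math.log2(Fraction(t_long) / Fraction(t_short))


def covers_recommended_range(exposures, min_stops=2):
    """Check that the longest and shortest exposures are each at least
    `min_stops` stops away from the middle exposure (assumed to be the
    median of the sorted list)."""
    times = sorted(Fraction(t) for t in exposures)
    mid = times[len(times) // 2]
    return (stops_between(times[-1], mid) >= min_stops
            and stops_between(mid, times[0]) >= min_stops)


if __name__ == "__main__":
    # The example from the text: a mid-level exposure of 1/40s.
    shorter, mid, longer = bracket_exposures(Fraction(1, 40))
    print(f"shorter: {shorter}s, mid: {mid}s, longer: {longer}s")
    print(covers_recommended_range([shorter, mid, longer]))
```

Exposure times are kept as `Fraction` values so that shutter speeds such as 1/40s stay exact and print in the familiar fractional form. If your camera only offers fixed shutter speeds, pick the nearest available values that are at least as far from the mid-level exposure as the computed ones.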
